Retrieval-Augmented Generation (RAG) has transformed how we build AI applications. While prototyping a RAG system is straightforward—chunk documents, embed them, store in a vector database, retrieve, and generate—taking it to production is an entirely different challenge. After building and deploying multiple RAG systems serving millions of queries, I've learned that the gap between a demo and a production system is vast and filled with subtle gotchas.

This post shares hard-won lessons on building RAG systems that actually work at scale.

The Core Challenges

Before diving into solutions, let's understand what makes production RAG hard:

Retrieval Quality vs. Latency Trade-offs: Users expect sub-second responses, but thorough semantic search across millions of documents takes time. Every millisecond counts when you're combining retrieval with LLM inference.

Context Window Management: Even with 100k+ context windows, you can't just dump everything. Token costs add up, and more context doesn't always mean better answers. Finding the right balance is critical.

Data Freshness: Your knowledge base isn't static. Documents change, new information arrives, and stale data leads to incorrect answers. Managing updates without full reindexing is essential.

Evaluation and Monitoring: Unlike traditional ML, RAG systems are harder to evaluate. There's no single metric that captures "good retrieval + good generation." You need comprehensive observability.

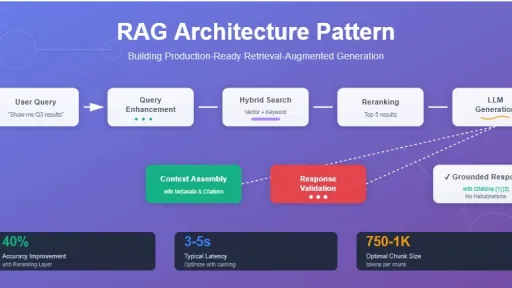

Architecture: Beyond the Basics

The Hybrid Search Approach

Pure vector search sounds elegant, but in production, hybrid search consistently outperforms it:

    # Pseudocode for hybrid search
    def hybrid_search(query, alpha=0.7):
        # Dense retrieval (semantic)
        semantic_results = vector_db.search(embed(query), top_k=20)

        # Sparse retrieval (keyword)
        bm25_results = elasticsearch.search(query, top_k=20)

        # Weighted Reciprocal Rank Fusion:
        # alpha weights the semantic ranking, (1 - alpha) the BM25 ranking
        combined = rrf_combine(semantic_results, bm25_results, alpha=alpha)

        return combined[:10]

Why hybrid works: Semantic search excels at conceptual matches but struggles with exact terms, product codes, or proper nouns. BM25 handles these well. Reciprocal Rank Fusion (RRF) combines the two rankings using only rank positions, so you never have to normalize scores that come from different systems. The one knob left is alpha, which sets how much the semantic ranking counts relative to BM25.

Pro tip: Set alpha per document type. Technical docs benefit from a higher BM25 weight of 0.4-0.5 (alpha of 0.5-0.6), while conceptual content works better with semantic-heavy weighting (alpha of 0.7-0.8).
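Since rrf_combine is left undefined above, here is a minimal sketch of one way it could look. It assumes each ranking is a plain list of document IDs, best first, and that alpha weights the semantic list. The constant k=60 is the value commonly used for RRF, not something tuned for this post.

```python
from collections import defaultdict

def rrf_combine(semantic_ids, bm25_ids, alpha=0.7, k=60):
    """Fuse two ranked lists of document IDs with weighted Reciprocal Rank Fusion.

    Each list contributes weight / (k + rank) per document, with rank starting at 1.
    alpha weights the semantic list and (1 - alpha) the BM25 list (an assumption).
    """
    scores = defaultdict(float)
    for weight, ranking in ((alpha, semantic_ids), (1.0 - alpha, bm25_ids)):
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += weight / (k + rank)
    # Highest fused score first; ties broken by document ID for determinism
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))

fused = rrf_combine(["a", "b", "c"], ["c", "a", "d"], alpha=0.5)
print(fused)
```

Documents that appear in both lists ("a" and "c" here) rise to the top because they collect a contribution from each ranking.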

Chunking Strategy: Size Isn't Everything

The standard "chunk by 512 tokens with 50 token overlap" is rarely optimal. Here's what actually works:

Semantic chunking: Break on natural boundaries (headers, paragraphs, topic shifts) rather than arbitrary token counts. Use small embedding models to detect topic changes.
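As a rough illustration of breaking on natural boundaries, the sketch below splits on Markdown-style headings and blank-line paragraphs, then packs paragraphs up to a word budget. The function name, the max_words parameter, and the assumption that headings start with "#" and are separated by blank lines are all mine. Embedding-based topic-shift detection is left out.

```python
import re

def chunk_by_structure(text, max_words=200):
    """Split text at Markdown-style headings, then pack paragraphs into chunks."""
    chunks = []
    heading = ""
    buffer, count = [], 0

    def flush():
        # Emit the buffered paragraphs as one chunk under the current heading
        nonlocal buffer, count
        if buffer:
            chunks.append({"heading": heading, "text": "\n\n".join(buffer)})
        buffer, count = [], 0

    for block in re.split(r"\n\s*\n", text.strip()):
        block = block.strip()
        if not block:
            continue
        if block.startswith("#"):
            flush()  # a heading always closes the current chunk
            heading = block.lstrip("#").strip()
            continue
        words = len(block.split())
        if buffer and count + words > max_words:
            flush()  # budget exceeded: start a new chunk
        buffer.append(block)
        count += words
    flush()
    return chunks

doc = "# Auth\n\nUse tokens.\n\nRotate keys.\n\n# Limits\n\n100 requests per minute."
for chunk in chunk_by_structure(doc, max_words=50):
    print(chunk["heading"], "->", repr(chunk["text"]))
```

A paragraph longer than max_words is kept whole rather than cut mid-thought, which is usually the safer default for retrieval.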

Parent-child relationships: Store small chunks for retrieval but provide larger context to the LLM:

    class ChunkStore:
        def __init__(self):
            self.chunks = {}   # Small searchable chunks
            self.parents = {}  # Full sections/documents

        def retrieve(self, query, k=5):
            # Retrieve small chunks
            chunks = self.vector_search(query, k)

            # Return each parent once, in rank order
            # (several chunks often share the same parent)
            parent_ids = dict.fromkeys(chunk.parent_id for chunk in chunks)
            return [self.parents[parent_id] for parent_id in parent_ids]

Pro tip: For technical documentation, chunk at the subsection level but include the full section hierarchy in metadata. This gives the LLM critical context about where information lives.

Metadata: The Secret Weapon

Metadata-filtered retrieval dramatically improves precision:

    # Rich metadata schema
    metadata = {
        "doc_type": "api_reference",
        "version": "2.1.0",
        "last_updated": "2025-01-15",
        "language": "python",
        "category": "authentication",
        "confidence_score": 0.95,
    }

    # Query with filters
    # Caution: if your store compares strings lexicographically, "10.0.0"
    # sorts before "2.0.0"; store a numeric version field for range filters.
    results = vector_db.search(
        query=embed(user_query),
        filters={
            "version": {"$gte": "2.0.0"},
            "doc_type": {"$in": ["api_reference", "tutorial"]},
            "confidence_score": {"$gte": 0.8},
        },
    )

Critical metadata fields:

- Timestamps: Enable time-based filtering and freshness decay

- Source information: Help users verify and explore

- Version tags: Essential for technical documentation

- Confidence scores: Flag potentially outdated or uncertain content

- Access controls: Security at the metadata level
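One detail worth spelling out for version tags: range filters on strings like "2.0.0" compare character by character, so they break once a component reaches two digits. A minimal sketch of one workaround is to store a sortable integer alongside the version string. The helper name and the limit of 999 per component are assumptions for illustration.

```python
def version_key(version):
    """Encode 'major.minor.patch' as one integer so range filters sort numerically."""
    major, minor, patch = (int(part) for part in version.split("."))
    if not all(0 <= part < 1000 for part in (major, minor, patch)):
        raise ValueError(f"version component out of range: {version}")
    return major * 1_000_000 + minor * 1_000 + patch

print(version_key("2.1.0"))                            # 2001000
print("10.0.0" >= "2.0.0")                             # False: string comparison
print(version_key("10.0.0") >= version_key("2.0.0"))   # True: numeric comparison
```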

Prompt Engineering for RAG

The prompt matters more than you think. Here's a production-tested template:

    prompt_template = """
    You are a helpful assistant answering questions using the provided context.

    CONTEXT:
    {context}

    RULES:
    1. Only use information from the context above
    2. If the context doesn't contain the answer, say "I don't have enough information to answer that"
    3. Cite specific sections when making claims
    4. If information seems outdated, mention the date from the metadata

    USER QUESTION: {question}

    RESPONSE:
    """

Key lessons:

Explicit hallucination prevention: Models will confidently make things up unless told otherwise. The "only use context" instruction is essential, but it reduces hallucination rather than eliminating it, so faithfulness still has to be measured.

Citation requirements: Forcing the model to cite sources improves accuracy and gives users confidence.

Handling ambiguity: Build in phrases for uncertainty. Users prefer "I'm not sure" over confident wrong answers.

Metadata awareness: Instruct the model to use timestamps and version info when relevant, and make sure those fields actually appear in the context you pass in.
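Rules 3 and 4 in the template only work if the context carries something to cite and a date to mention. A minimal sketch of building the {context} string that way follows. The dict layout with "content" and "metadata" keys, and the "source" field, are assumptions rather than a fixed schema.

```python
def format_context(docs):
    """Render retrieved docs as numbered, labeled blocks the model can cite."""
    blocks = []
    for i, doc in enumerate(docs, start=1):
        meta = doc.get("metadata", {})
        header = (
            f"[{i}] source={meta.get('source', 'unknown')} "
            f"last_updated={meta.get('last_updated', 'unknown')}"
        )
        blocks.append(f"{header}\n{doc['content']}")
    return "\n\n".join(blocks)

docs = [
    {"content": "Tokens expire after 24 hours.",
     "metadata": {"source": "auth.md", "last_updated": "2025-01-15"}},
    {"content": "Rate limits reset hourly.", "metadata": {}},
]
context = format_context(docs)
print(context)
```

The bracketed numbers give the model a short handle for citations, and missing metadata shows up as "unknown" instead of silently disappearing.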

Evaluation: Measuring What Matters

Production RAG needs continuous evaluation. Here's a practical framework:

Retrieval Metrics

Hit Rate @ K: Does the correct document appear in top K results?

    def hit_rate_at_k(queries, ground_truth, k=5):
        hits = 0
        for query, truth_doc_id in zip(queries, ground_truth):
            results = retrieve(query, k)
            if truth_doc_id in {r.id for r in results}:
                hits += 1
        # Guard against an empty evaluation set
        return hits / len(queries) if queries else 0.0

Mean Reciprocal Rank (MRR): How high do correct results rank?
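For completeness, here is a minimal MRR sketch. It assumes one correct document ID per query and takes precomputed rankings (lists of IDs, best first) rather than calling a retriever. Queries whose correct document never appears contribute 0.

```python
def mean_reciprocal_rank(ranked_ids_per_query, ground_truth):
    """Average of 1/rank of the correct document across queries (0 if not retrieved)."""
    total = 0.0
    for ranked_ids, truth_id in zip(ranked_ids_per_query, ground_truth):
        for rank, doc_id in enumerate(ranked_ids, start=1):
            if doc_id == truth_id:
                total += 1.0 / rank
                break
    return total / len(ground_truth) if ground_truth else 0.0

rankings = [["d1", "d2", "d3"], ["d9", "d4", "d5"], ["d7", "d8"]]
truth = ["d1", "d4", "d0"]
print(mean_reciprocal_rank(rankings, truth))  # (1 + 1/2 + 0) / 3 = 0.5
```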

Retrieval Latency: P50, P95, P99 latencies matter for user experience
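If you are collecting raw latency samples yourself, the standard library is enough to get these percentiles. A small sketch, assuming a list of per-request latencies in milliseconds (the function name is illustrative):

```python
import statistics

def latency_percentiles(latencies_ms):
    """Return P50, P95 and P99 from a list of latency samples in milliseconds."""
    # quantiles(n=100) returns the 99 cut points P1..P99
    cuts = statistics.quantiles(latencies_ms, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94], "p99": cuts[98]}

samples = list(range(1, 101))  # stand-in data: 1 ms .. 100 ms
print(latency_percentiles(samples))
```

In a real deployment your metrics backend will usually compute these from histograms, but this is handy for offline analysis of logged requests.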

Generation Metrics

Faithfulness: Does the answer stick to the retrieved context?

    # Use an LLM as judge
    faithfulness_prompt = """
    Context: {context}

    Answer: {answer}

    Is this answer faithful to the context?
    Reply with only YES or NO.
    """

Relevance: Does it actually answer the question?

Citation Accuracy: Do the cited sources actually support the claims attributed to them?

End-to-End Metrics

User Satisfaction: Thumbs up/down, follow-up questions

Task Completion: Can users accomplish their goals?

Retrieval-Generation Correlation: Are good retrievals leading to good answers?

Latency Optimization

Sub-second response times require optimization at every layer:

Caching Strategy

Implement multi-level caching:

    from cachetools import LRUCache, TTLCache

    class RAGCache:
        def __init__(self):
            self.embedding_cache = LRUCache(maxsize=10000)
            self.retrieval_cache = LRUCache(maxsize=5000)
            self.response_cache = TTLCache(maxsize=1000, ttl=3600)

        async def query(self, question):
            # L1: Full response cache
            if (cached := self.response_cache.get(question)) is not None:
                return cached

            # L2: Embedding cache
            embedding = self.embedding_cache.get(question)
            if embedding is None:
                embedding = await embed(question)
                self.embedding_cache[question] = embedding

            # L3: Retrieval cache, keyed by the question because
            # embedding vectors (lists/arrays) are not hashable
            docs = self.retrieval_cache.get(question)
            if docs is None:
                docs = await vector_db.search(embedding)
                self.retrieval_cache[question] = docs

            # Generate and cache
            response = await llm.generate(question, docs)
            self.response_cache[question] = response
            return response

Async Everything

Parallelize independent operations:

    import asyncio

    async def retrieve_and_generate(query):
        # Parallel retrieval from multiple sources
        results = await asyncio.gather(
            vector_db.search(query),
            elasticsearch.search(query),
            knowledge_graph.query(query),
        )

        # gather returns one result list per source; flatten before reranking
        candidates = [doc for source_results in results for doc in source_results]
        ranked = await reranker.rank(query, candidates)

        # Stream generation
        async for chunk in llm.stream(query, ranked):
            yield chunk

Vector Database Optimization

Choose indexes wisely:

- HNSW: Best for recall, moderate build time

- IVF: Fast search, good for very large datasets

- DiskANN: Memory-efficient for billion-scale

Pro tip: Use approximate search (90-95% recall) with a fast reranker rather than exhaustive search. The reranker improves precision while keeping latency low.
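To know whether your index really sits in that 90-95% range, compare its results against exact (brute-force) search on a sample of queries. A minimal sketch of the per-query measurement, assuming both searches return lists of document IDs, best first:

```python
def recall_at_k(approx_ids, exact_ids, k=10):
    """Fraction of the exact top-k neighbors that the approximate search also returned."""
    exact_top = set(exact_ids[:k])
    if not exact_top:
        return 0.0
    return len(exact_top & set(approx_ids[:k])) / len(exact_top)

exact = ["d1", "d2", "d3", "d4", "d5", "d6", "d7", "d8", "d9", "d10"]
approx = ["d1", "d2", "d3", "d4", "d5", "d6", "d7", "d8", "d9", "d42"]
print(recall_at_k(approx, exact))  # 0.9: one true neighbor was missed
```

Average this over a few hundred representative queries whenever you change index parameters.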

Handling Updates and Freshness

Incremental Updates

Avoid full reindexing:

    class IncrementalIndexer:
        def update_document(self, doc_id, new_content):
            # Soft-delete old chunks so queries stop returning them
            self.mark_deleted(doc_id)

            # Add new chunks, all sharing one new version number
            version = self.get_next_version()
            for chunk in self.chunk(new_content):
                chunk.metadata['version'] = version
                chunk.metadata['parent_id'] = doc_id
                self.index(chunk)

            # Physically remove the soft-deleted chunks after a grace period
            self.schedule_cleanup(doc_id, delay='1h')

Temporal Decay

Newer information should be preferred:

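    from datetime import datetime
    from math import exp

    def rerank_with_freshness(results, decay_rate=0.1):
        # decay_rate is per day: 0.1 halves a score about every 7 days (ln 2 / 0.1)
        now = datetime.now()
        for result in results:
            # Assumes last_updated is a datetime; parse ISO date strings first
            age_days = max((now - result.metadata['last_updated']).days, 0)
            freshness_weight = exp(-decay_rate * age_days)
            result.score *= freshness_weight
        return sorted(results, key=lambda x: x.score, reverse=True)

Observability and Debugging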

Production RAG systems need comprehensive monitoring:

Logging Strategy

    # observe() and trace() stand in for your tracing library's decorator and span API
    @observe()
    async def rag_query(question):
        with trace("rag_pipeline") as t:
            # Log inputs
            t.set_attribute("question", question)
            t.set_attribute("question_length", len(question))

            # Retrieval
            with trace("retrieval"):
                docs = await retrieve(question, k=10)
                t.set_attribute("num_retrieved", len(docs))
                # docs may be empty, so guard the top-score lookup
                t.set_attribute("top_score", docs[0].score if docs else None)
                t.set_attribute("doc_ids", [d.id for d in docs])

            # Generation
            with trace("generation"):
                response = await generate(question, docs)
                t.set_attribute("response_length", len(response.answer))
                t.set_attribute("tokens_used", response.usage.total_tokens)

            return response

Key Metrics to Track

Retrieval Health:

- Distribution of similarity scores

- Number of documents retrieved per query

- Cache hit rates

- Retrieval latency (P50, P95, P99)

Generation Health:

- Token usage distribution

- Response latency

- Citation rate

- "I don't know" frequency

System Health:

- Error rates

- Timeout rates

- Cost per query

- Throughput

Cost Optimization

RAG can get expensive fast. Here's how to control costs:

Smart Embedding Strategies

Not all queries need expensive embeddings:

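    class EmbeddingRouter:
        def embed(self, text):
            # Vectors from different models are not comparable, so each model
            # needs its own index. Return the index to search along with the
            # vector (the index names here are illustrative).

            # Simple queries use small model
            if len(text.split()) < 10:
                return "small_index", self.small_model.embed(text)

            # Complex queries use large model
            return "large_index", self.large_model.embed(text)

Context Pruning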

Remove irrelevant content before it reaches the LLM:

    def prune_context(retrieved_docs, query, max_tokens=4000):
        # Rerank for relevance
        reranked = reranker.rank(query, retrieved_docs)

        # Take top until token limit
        pruned = []
        token_count = 0
        for doc in reranked:
            doc_tokens = count_tokens(doc.content)
            if token_count + doc_tokens > max_tokens:
                break
            pruned.append(doc)
            token_count += doc_tokens

        return pruned

Batch Processing

For analytics queries, batch when possible:

    import asyncio

    async def batch_rag_queries(questions):
        # Single embedding batch
        embeddings = await embed_batch(questions)

        # Parallel retrieval
        all_docs = await asyncio.gather(*[
            retrieve(emb) for emb in embeddings
        ])

        # Batch generation
        responses = await llm.batch_generate(questions, all_docs)

        return responses

Security Considerations

Production RAG handles sensitive data. Here's what to lock down:

Access Control

    class SecureRAG:
        async def query(self, question, user_context):
            # Filter by permissions
            results = await self.retrieve(
                question,
                filters={
                    "access_groups": {"$in": user_context.groups},
                    "security_level": {"$lte": user_context.clearance},
                },
            )

            # Redact sensitive fields
            sanitized = self.redact_pii(results)

            return await self.generate(question, sanitized)

PII Handling

Avoid storing PII in vector databases:

    def anonymize_before_indexing(document):
        # Detect and replace PII, threading the result through each step.
        # Each helper is assumed to return (new_doc, replacements_dict).
        replacements = {}
        doc = document
        for step in (
            detect_and_replace_names,
            detect_and_replace_emails,
            detect_and_replace_phone_numbers,
        ):
            doc, found = step(doc)
            replacements.update(found)

        # Store mapping separately (encrypted)
        save_pii_mapping(document.id, replacements)

        return doc

Common Pitfalls and Solutions

Pitfall 1: Over-Retrieval

Problem: Passing 50+ retrieved documents straight to the LLM and hoping it figures things out.

Solution: Retrieve a broad candidate set (20-50), rerank aggressively, and send only the top 5-10 to the LLM.

Pitfall 2: Ignoring Document Structure

Problem: Treating all chunks equally regardless of their role.

Solution: Weight headings, summaries, and key sections higher. Include document structure in metadata.

Pitfall 3: Static Systems

Problem: Not adapting to changing data or user behavior.

Solution: Implement feedback loops, monitor query patterns, and regularly retune based on real usage.

Pitfall 4: Black Box Failures

Problem: RAG fails and you can't tell if it's retrieval or generation.

Solution: Comprehensive logging at each stage. Store retrieved docs, scores, and generation prompts.

Pitfall 5: Ignoring Edge Cases

Problem: System works on average but fails catastrophically on certain queries.

Solution: Build query classifiers to detect and handle special cases (ambiguous queries, multi-hop questions, time-sensitive queries).
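A query classifier does not have to start as a model. As a rough sketch, a few keyword rules can route the obvious cases while you collect data for something better. The route labels, keyword lists, and three-word threshold below are illustrative assumptions, not tuned values.

```python
import re

def classify_query(query):
    """Return a coarse route label for a query using simple keyword rules."""
    text = query.lower().strip()
    if len(text.split()) < 3:
        return "ambiguous"       # too short to retrieve on reliably
    if re.search(r"\b(latest|current|today|now|this (week|month|year))\b", text):
        return "time_sensitive"  # prefer fresh documents
    if re.search(r"\b(compare|versus|vs|difference between)\b", text):
        return "multi_hop"       # likely needs several lookups
    return "standard"

for q in ["pricing",
          "What is the latest API version?",
          "Compare the v1 and v2 authentication flows",
          "How do I rotate an API key?"]:
    print(q, "->", classify_query(q))
```

Each label can then map to a different handler: ask a clarifying question, apply a freshness filter, decompose into sub-questions, or run the default pipeline.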

Advanced Techniques

Once you've mastered the basics, consider these advanced patterns:

Query Expansion

Generate multiple query variants:

    import asyncio

    async def expanded_query(question):
        # Generate alternative phrasings, one per line
        raw = await llm.generate(f"""
        Generate 3 alternative ways to ask this question, one per line:
        {question}
        """)
        # llm.generate is assumed to return text; split it into separate queries
        expansions = [line.strip() for line in raw.splitlines() if line.strip()]

        # Retrieve with all variants
        all_results = await asyncio.gather(*[
            retrieve(q) for q in [question] + expansions
        ])

        # Deduplicate and rerank
        return deduplicate_and_rerank(all_results)

Multi-Hop Reasoning

For complex questions requiring multiple lookups:

    async def multi_hop_rag(question):
        # Decompose into sub-questions
        sub_questions = await decompose(question)

        # Answer each sub-question
        sub_answers = []
        for sq in sub_questions:
            docs = await retrieve(sq)
            answer = await generate(sq, docs)
            sub_answers.append(answer)

        # Synthesize final answer
        final = await synthesize(question, sub_answers)

        return final

Adaptive Retrieval

Dynamically adjust k based on query complexity:

    def adaptive_k(query):
        complexity = estimate_complexity(query)

        if complexity < 0.3:    # Simple lookup
            return 3
        elif complexity < 0.7:  # Moderate
            return 10
        else:                   # Complex synthesis
            return 20

Deployment Patterns

Serverless vs. Always-On

Serverless (AWS Lambda, Cloud Functions):

- Pros: Cost-effective for low traffic, auto-scaling

- Cons: Cold starts, limited execution time, smaller memory

Always-On (Kubernetes, ECS):

- Pros: Consistent latency, more control, better for high traffic

- Cons: Higher baseline cost, need to manage scaling

Recommendation: Start serverless, move to always-on as you scale past 1000 requests/hour.

Model Serving

For embedding and reranking models:

    # Use model servers for better throughput
    from ray import serve
    from sentence_transformers import SentenceTransformer

    @serve.deployment(num_replicas=3)
    class EmbeddingService:
        def __init__(self):
            self.model = SentenceTransformer("all-MiniLM-L6-v2")

        def embed(self, texts):
            # encode() is synchronous, so this method is a plain def
            return self.model.encode(texts)

    serve.run(EmbeddingService.bind())

Testing Strategy

RAG systems need comprehensive testing:

Unit Tests

    def test_chunking():
        doc = load_test_document()
        chunks = chunk_document(doc)

        # Validate chunk properties
        # (word count is a cheap proxy; use your tokenizer for a true token limit)
        assert all(len(c.text.split()) <= 512 for c in chunks)
        assert all(c.metadata['parent_id'] == doc.id for c in chunks)
        assert no_lost_content(doc, chunks)

    def test_retrieval():
        query = "How do I authenticate?"
        results = retrieve(query, k=5)

        assert len(results) == 5
        assert all(0 <= r.score <= 1 for r in results)
        assert results[0].score >= results[-1].score

Integration Tests

    import pytest

    @pytest.mark.integration
    @pytest.mark.asyncio  # requires the pytest-asyncio plugin
    async def test_end_to_end():
        question = "What's the API rate limit?"
        response = await rag_query(question)

        # Validate response structure
        assert response.answer
        assert response.citations
        assert response.confidence > 0.5

        # Validate answer quality
        assert "rate limit" in response.answer.lower()
        assert any("api" in c.text.lower() for c in response.citations)

Golden Dataset Testing

Maintain a set of question-answer pairs:

    import pytest

    @pytest.mark.asyncio  # requires the pytest-asyncio plugin
    async def test_golden_dataset():
        dataset = load_golden_dataset()

        for item in dataset:
            # rag_query is async, so it must be awaited
            response = await rag_query(item.question)

            # Check semantic similarity
            similarity = compute_similarity(
                response.answer,
                item.expected_answer,
            )
            assert similarity > 0.8, f"Failed on: {item.question}"

Real-World Performance Numbers

From production systems handling millions of queries:

Latency Targets:

- Embedding: < 50ms

- Vector search: < 100ms

- Reranking: < 150ms

- LLM generation: < 2s (streaming)

- Total P95: < 3s

Accuracy Benchmarks:

- Hit Rate @ 5: > 85%

- Faithfulness: > 95%

- User satisfaction: > 80%

Cost at Scale:

- Embedding: $0.0001 per query

- Vector search: $0.00005 per query

- LLM generation: $0.002-0.02 per query (varies by model)

- Total: $0.003-0.025 per query
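Per-query numbers look tiny until you multiply them out. A quick back-of-the-envelope sketch, using the total range above and a hypothetical volume of 100,000 queries per day:

```python
def monthly_cost(queries_per_day, cost_per_query, days=30):
    """Project monthly spend from a per-query cost."""
    return queries_per_day * cost_per_query * days

low = monthly_cost(100_000, 0.003)
high = monthly_cost(100_000, 0.025)
print(f"${low:,.0f} to ${high:,.0f} per month")  # $9,000 to $75,000 per month
```

The spread between the low and high end comes almost entirely from LLM generation, which is why response caching and context pruning tend to pay off first.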

Conclusion

Building production-ready RAG systems is about the unglamorous details: caching strategies, monitoring, error handling, and continuous evaluation. The sexy part—hooking up a vector database to an LLM—is maybe 10% of the work.

Key takeaways:

- Hybrid search beats pure vector search in production

- Metadata filtering is your secret weapon

- Observability is non-negotiable

- Cost optimization requires work at every layer

- The gap between prototype and production is enormous

Start simple, measure everything, and iterate based on real user behavior. RAG systems that work at scale are built incrementally, not in one giant leap.

Remember: the best RAG system is one that ships and improves over time, not the perfect system that never launches.