Blog post: JSON vs TOON — the AI-era data format comparison

AI & Data Formats

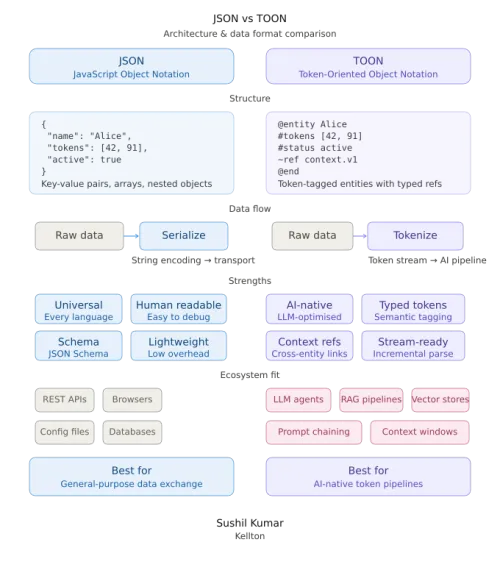

JSON is great. But TOON is built for the AI era.

Why Token-Oriented Object Notation is quietly trimming 30–60% off the tokens developers send to LLMs, and why you should start paying attention.

For nearly two decades, JSON has been the universal language of data on the web. It's everywhere — APIs, configs, databases, you name it. Clean, readable, widely supported. But as AI systems and large language models become central to how we build software, a quiet problem has emerged: JSON is expensive.

Not in dollars directly, but in tokens, and tokens are what LLM providers bill you for. Enter TOON (Token-Oriented Object Notation), a new format released in late 2024 that's purpose-built for the way AI systems actually consume data. Let's break down what TOON is, how it differs from JSON, and when you should use it.

The problem

Why JSON becomes costly in AI workflows

JSON was designed for human readability and web interchange — not for LLM token efficiency. Every piece of syntax counts as tokens: every brace {}, every bracket [], every quote, every repeated key name. When you pass a list of 100 user records to an LLM, you're repeating the field names — id, name, role, email — 100 times.
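To make the repetition concrete, the sketch below serializes three hypothetical user records with Python's standard `json` module and counts how often each key name and each brace, bracket, and quote character appears. The records are made up for illustration, and the counts are characters rather than tokens, since exact token counts depend on the model's tokenizer.

```python
import json

# Three hypothetical records, for illustration only
users = [
    {"id": 1, "name": "Alice", "role": "admin"},
    {"id": 2, "name": "Bob", "role": "user"},
    {"id": 3, "name": "Carol", "role": "user"},
]

payload = json.dumps(users)

# How many times each field name is repeated in the serialized JSON
key_repeats = {key: payload.count(f'"{key}"') for key in users[0]}

# Pure punctuation: braces, brackets, and quote characters
structural_chars = sum(payload.count(ch) for ch in '{}[]"')

print(payload)
print(key_repeats)       # {'id': 3, 'name': 3, 'role': 3}
print(structural_chars)  # 38
```

Every additional record adds another copy of each key plus its quotes and braces, so the overhead grows linearly with the number of records.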

As a rough example, 100 employee records with a handful of fields each come to about 2,500 tokens in JSON. At GPT-4 pricing, the structural overhead in payloads like that costs real money at scale, and it consumes context-window space that could hold actual useful data.

Token savings

How the numbers compare

Official benchmarks from the TOON project — tested across GPT, Claude, Gemini, and Grok — show consistent savings across dataset types.

Key features

What makes TOON different by design

Schema declared once

Column headers like {id,name,role} are written once per array instead of being repeated in every record. This is the core idea behind most of TOON's token savings.
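As a rough illustration of the schema-once idea, here is a minimal Python sketch of a TOON-style tabular encoder. The function name `encode_tabular` is hypothetical and this is not the official TOON library; it assumes every record has the same keys in the same order and that no value needs quoting.

```python
def encode_tabular(name: str, rows: list[dict]) -> str:
    """Encode uniform, flat records as a TOON-style tabular block.

    Simplified sketch: every row must have the same keys in the same order,
    and values are written with str() and never quoted.
    """
    if not rows:
        raise ValueError("this sketch needs at least one row")
    fields = list(rows[0])
    if any(list(row) != fields for row in rows):
        raise ValueError("tabular form requires identical keys in every row")
    # Header: array name, explicit length, and the field names, written once
    header = f"{name}[{len(rows)}]{{{','.join(fields)}}}:"
    # One indented, comma-separated line of values per record
    body = ["  " + ",".join(str(row[f]) for f in fields) for row in rows]
    return "\n".join([header, *body])


users = [
    {"id": 1, "name": "Alice", "role": "admin"},
    {"id": 2, "name": "Bob", "role": "user"},
    {"id": 3, "name": "Carol", "role": "user"},
]
print(encode_tabular("users", users))
# users[3]{id,name,role}:
#   1,Alice,admin
#   2,Bob,user
#   3,Carol,user
```

The three key names appear once in the header line instead of once per record, which is where the savings on uniform data come from.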

YAML-style indentation

Uses indentation instead of curly braces for nested structures. Cleaner to read, fewer punctuation tokens consumed.
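The indentation rule can be sketched the same way. `encode_object` below is a hypothetical helper, not part of any TOON package; it handles nested dictionaries and plain string or number values only, rendering them with Python's `str()`, so booleans, `None`, and lists would need extra handling.

```python
def encode_object(obj: dict, indent: int = 0) -> str:
    """Render nested dicts as indented `key: value` lines instead of braces.

    Simplified sketch: values are nested dicts or plain strings/numbers,
    rendered with str(); booleans, None, and lists are not handled.
    """
    pad = "  " * indent  # two spaces per nesting level
    lines = []
    for key, value in obj.items():
        if isinstance(value, dict):
            # Nested object: the key gets its own line, children go one level deeper
            lines.append(f"{pad}{key}:")
            child = encode_object(value, indent + 1)
            if child:
                lines.append(child)
        else:
            lines.append(f"{pad}{key}: {value}")
    return "\n".join(lines)


config = {"model": "example", "limits": {"max_tokens": 512, "timeout": 30}}
print(encode_object(config))
# model: example
# limits:
#   max_tokens: 512
#   timeout: 30
```

Compared with `{"model": "example", "limits": {"max_tokens": 512, "timeout": 30}}`, the braces and the quotes around keys and simple values disappear; the nesting is carried entirely by indentation.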

Explicit array length

Array brackets include the element count, as in users[3]. This metadata helps LLMs validate structure and can reduce hallucinations during parsing.
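Because the header states how many rows follow, a consumer can cheaply check whether a block is complete. This sketch assumes a header of the form `users[3]{id,name,role}:`, combining the length and field list described above, and values without embedded commas. `validate_tabular` is a hypothetical helper; a real TOON parser performs stricter checks.

```python
import re

# Header shape assumed here: name[count]{field1,field2,...}:
HEADER = re.compile(r"^(\w+)\[(\d+)\]\{([^}]*)\}:$")


def validate_tabular(block: str) -> bool:
    """Check that a tabular block has the declared number of rows and fields.

    Simplified sketch: splits rows on commas, so quoted values containing
    commas are not supported.
    """
    header, *rows = block.strip("\n").splitlines()
    match = HEADER.match(header)
    if match is None:
        raise ValueError(f"not a tabular header: {header!r}")
    declared_rows = int(match.group(2))
    fields = match.group(3).split(",")
    if len(rows) != declared_rows:
        return False  # row count differs from the declared length
    # Each row must supply exactly one value per declared field
    return all(len(row.strip().split(",")) == len(fields) for row in rows)


complete = "users[2]{id,name}:\n  1,Alice\n  2,Bob"
truncated = "users[3]{id,name}:\n  1,Alice\n  2,Bob"
print(validate_tabular(complete))   # True
print(validate_tabular(truncated))  # False
```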

Minimal quoting

Strings are quoted only when necessary. A value like hello world needs no quotes; inner spaces are fine. This saves tokens without creating ambiguity.
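A minimal-quoting rule can be approximated with a small predicate. The specific triggers below (empty or padded strings, the delimiter, colons, quotes, backslashes, newlines, and strings that would otherwise read as numbers, booleans, or null) are a simplified assumption for illustration, and `needs_quotes` / `quote_if_needed` are hypothetical names; the TOON specification defines the exact rules.

```python
import re

# Strings that a decoder would otherwise read as numbers, booleans, or null
LITERAL = re.compile(r"-?\d+(\.\d+)?([eE][+-]?\d+)?|true|false|null")


def needs_quotes(value: str, delimiter: str = ",") -> bool:
    """Approximate minimal-quoting rule (simplified; not the full TOON spec)."""
    if value == "" or value != value.strip():
        return True  # empty or padded strings would be lost or trimmed
    if any(ch in value for ch in (delimiter, ":", '"', "\\", "\n")):
        return True  # characters with structural meaning
    return LITERAL.fullmatch(value) is not None  # "42" must stay a string


def quote_if_needed(value: str) -> str:
    if not needs_quotes(value):
        return value
    # Escape backslashes first, then quotes and newlines
    escaped = value.replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")
    return f'"{escaped}"'


for v in ["hello world", "Smith, Jane", "42", " padded", "true"]:
    print(f"{v!r:15} -> {quote_if_needed(v)}")
```

Only `hello world` comes through unquoted; the others either contain the delimiter, carry significant whitespace, or would be misread as a non-string literal.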

Lossless conversion

TOON ↔ JSON conversion is lossless in both directions: you can convert to TOON at prompt time and convert back to JSON when needed, with no data lost.
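Lossless conversion means a decoder can rebuild the original values exactly. The sketch below, built around a hypothetical `decode_tabular` helper, parses a tabular block and compares the result with the equivalent JSON. It only restores integers and plain strings, which is enough for this example; full round-tripping of every JSON type depends on quoting rules that keep a string like "42" distinct from the number 42.

```python
import json
import re


def decode_tabular(block: str) -> tuple[str, list[dict]]:
    """Parse a TOON-style tabular block back into (name, list of dicts).

    Simplified sketch: assumes unquoted values without commas; integer-looking
    values become ints and everything else stays a string.
    """
    header, *rows = block.strip("\n").splitlines()
    name, rest = header.split("[", 1)   # "users", "3]{id,name,role}:"
    count, rest = rest.split("]", 1)    # "3", "{id,name,role}:"
    fields = rest.strip("{}:").split(",")

    def parse(value: str):
        return int(value) if re.fullmatch(r"-?\d+", value) else value

    records = [dict(zip(fields, map(parse, row.strip().split(",")))) for row in rows]
    if len(records) != int(count):
        raise ValueError("row count does not match the declared length")
    return name, records


toon = """users[3]{id,name,role}:
  1,Alice,admin
  2,Bob,user
  3,Carol,user"""

original_json = (
    '[{"id": 1, "name": "Alice", "role": "admin"}, '
    '{"id": 2, "name": "Bob", "role": "user"}, '
    '{"id": 3, "name": "Carol", "role": "user"}]'
)

name, records = decode_tabular(toon)
# Decoding yields exactly the data the JSON version describes
assert records == json.loads(original_json)
print(json.dumps(records))
```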

Nesting supported

TOON still handles hierarchical and nested data — it's not just flat tables. Think of it as JSON with better defaults for repetitive records.

Side by side

JSON vs TOON at a glance

JSON (established)

Strengths:

- Universal: works everywhere

- Rich tooling ecosystem

- Native to APIs & web services

- Ideal for deeply nested objects

- Best for LLM output / function calling

Trade-offs:

- Verbose: repeats keys in every record

- High token consumption at scale

- Structural noise adds prompt cost

TOON (AI-optimized)

Strengths:

- 30–60% fewer tokens than JSON in the project's benchmarks

- Higher LLM retrieval accuracy in those same benchmarks

- Well suited to tabular / uniform data

- Supports nesting + lists

- Explicit schema can help reduce hallucinations

Trade-offs:

- Not yet standard for public APIs

- Nascent tooling ecosystem

- Not ideal for LLM output parsing

Cost & scale

When does TOON actually save you money?

Token savings sound nice in isolation, but the real value compounds at scale. A single API call with 10 records? Savings are negligible. But consider a production system making thousands of LLM calls per day, each passing database records or analytics results.

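Consider 1,000 agent interactions per day, each passing 100 records. At roughly 25 JSON tokens per record and 30–60% savings, that works out to tens of millions of tokens saved per month. At current GPT-4 pricing, structural overhead alone becomes a meaningful line item on your cloud bill.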
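The arithmetic behind that estimate is simple enough to write down. This sketch reuses the article's own figures (1,000 interactions per day, 100 records per call, roughly 25 JSON tokens per record from the 2,500-tokens-per-100-records estimate, and 30–60% savings) and assumes a 30-day month; `monthly_tokens_saved` is a hypothetical helper.

```python
def monthly_tokens_saved(interactions_per_day: int, records_per_call: int,
                         json_tokens_per_record: float, savings_rate: float,
                         days: int = 30) -> float:
    """Estimate input tokens saved per month by sending TOON instead of JSON."""
    json_tokens_per_day = interactions_per_day * records_per_call * json_tokens_per_record
    return json_tokens_per_day * savings_rate * days


# Article's figures: 1,000 calls/day x 100 records x ~25 tokens, 30% and 60% savings
low = monthly_tokens_saved(1_000, 100, 25, 0.30)
high = monthly_tokens_saved(1_000, 100, 25, 0.60)
print(f"{low:,.0f} to {high:,.0f} tokens saved per month")
# 22,500,000 to 45,000,000 tokens saved per month
```

Multiply the result by your provider's per-token input price to turn it into a dollar figure; the article does not quote a specific rate, so none is assumed here.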

TOON is particularly powerful in multi-agent scenarios: less context-window pressure lets more agents collaborate within model limits, smaller inputs mean faster inference, and the cost savings scale linearly with agent count. The format also shines in RAG pipelines, where fitting more records into each prompt can improve retrieval quality.

When to use what

The practical decision guide

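Use JSON when...

- You are building public APIs, configuration, or external integrations

- The data is deeply nested or irregular

- You need the model's output to be machine-parsed, as with function calling

Use TOON when...

- You are sending large, uniform, table-like records to an LLM

- Prompt cost and context-window space matter, as in RAG pipelines and multi-agent systems

- You can convert from JSON at prompt time and keep JSON everywhere else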


Bottom line

JSON won the web. TOON is winning the AI layer.

These two formats aren't at war — they're complementary tools. JSON remains the universal workhorse for APIs, configuration, and web services. It isn't going anywhere. But for developers building AI-native applications, TOON offers a meaningful edge: cheaper prompts, better accuracy, and more data per context window.

The optimal strategy? Use JSON for your public APIs and external integrations. Convert to TOON at prompt time when sending data to LLMs. Convert back to JSON for output parsing. The conversion is lossless, the one-time tooling investment pays ongoing dividends, and the engineering trade-off is straightforward.
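The JSON-in, TOON-at-prompt-time, JSON-out pattern can be sketched in a few lines. Everything here is hypothetical: `call_llm` stands in for whatever model client you use and returns a canned reply, `to_toon_table` is a minimal encoder that assumes uniform keys and values that never need quoting, and the prompt wording is only an example.

```python
import json


def to_toon_table(name: str, rows: list[dict]) -> str:
    """Minimal TOON-style tabular encoder (assumes uniform keys, no quoting needed)."""
    fields = list(rows[0])
    lines = [f"{name}[{len(rows)}]{{{','.join(fields)}}}:"]
    lines += ["  " + ",".join(str(row[f]) for f in fields) for row in rows]
    return "\n".join(lines)


def call_llm(prompt: str) -> str:
    """Hypothetical stand-in for a real model client; always returns this canned JSON."""
    return '{"admins": [1]}'


def build_prompt(rows: list[dict]) -> str:
    # Step 2: convert to TOON only when building the prompt
    return "Reply in JSON with the ids of all admins.\n\n" + to_toon_table("users", rows)


def ask_about_users(api_json: str) -> dict:
    rows = json.loads(api_json)          # Step 1: JSON from your API or database
    reply = call_llm(build_prompt(rows))
    return json.loads(reply)             # Step 3: the model answers in JSON, parsed as usual


api_json = '[{"id": 1, "name": "Alice", "role": "admin"}, {"id": 2, "name": "Bob", "role": "user"}]'
print(ask_about_users(api_json))  # {'admins': [1]}
```

Keeping JSON at both edges means your APIs and output parsing stay unchanged; only the prompt-building step knows about TOON.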

In 2025 and beyond, the developers who understand both formats — and know when to use each — will have a genuine cost and performance advantage in AI-driven systems.

TOON is still a relatively new format (released October 2024), and its ecosystem is growing rapidly. Independent academic validation is still catching up to the official benchmarks — but early adoption is accelerating across AI workflows, and the core idea is sound: stop repeating yourself when talking to LLMs.